Best PC platform for running Esxi/Docker at home?

-

I get that efficiency is important for a device that runs 24/7, but looking at the options you have with a 5800U, I think you sacrifice a lot. How do you add hard drives for storage, via USB 3? What about RAID? How do you know if the laptop's thermal design is sufficient for 24/7 uptime?

Do not focus too much on the nominal TDP. It is basically just an approximation for the cooling solution required to achieve the rated performance of the CPU. In the specific case of AMD Ryzen CPUs, the rated TDP roughly equals the power draw in P0 state, which is the highest active power state the CPU can be in.

For example, a Ryzen 5 5600X rated at 65W may draw 60 to 75W under highly demanding workloads (Prime95 and such), once the thermal limit is reached and everything has settled. Modern CPUs are smart enough to switch to quite efficient P-states when they have nothing to do, lowering the clock to the sub-GHz range and drastically reducing the core voltage. So if the CPU is idling, it may only draw 10 to 15% of the rated TDP, and a home server will likely idle 95% of the time.

At this point, other components (mainboard chipset, hard drives and other peripherals, power supply losses, ...) might start to contribute more to the total power consumption of the PC than the CPU itself. And I haven't even mentioned that you could underclock the CPU (which doesn't void the warranty) to increase its efficiency even further.

-

@BearWithBeard said in Best PC platform for running Esxi/Docker at home?:

Modern CPUs are smart enough to switch to quite efficient P-states when they have nothing to do, lowering the clock to the sub-GHz range, drastically reducing the core voltage. So if the CPU is idling, it may only draw 10 to 15% of the rated TDP - and a home server will likely idle 95% of the time.

I can believe that may be true of some CPUs, but I wonder whether it really holds true for the Ryzen 5 5600X. Instead of a min, max, and "default" TDP, its spec sheet lists just one TDP, 65W:

https://www.amd.com/en/products/cpu/amd-ryzen-5-5600x

In contrast, the Ryzen 7 5800U gives a range of 10-25W, with a "default" of 15W:

https://www.amd.com/en/products/apu/amd-ryzen-7-5800u

That said, maybe there's a better place I could look to confirm that it draws only 10 to 15% of its nominal power while idling?

I do very much like that the Ryzen 5 5600X has a very good single-thread passmark score for its roughly $299 price, which is quite favorable compared to much of its competition. If its power draw really were 10-15% of its nominal 65w about 95% of the time, then I think it would be a great choice. But how to confirm that without actually buying it and testing it? Surely it must be documented somewhere. But where exactly?

-

The cTDP is generally only provided for APUs (CPU + GPU on a single chip). It allows OEMs to easily limit the power draw (mainly the all core boost clock) to match the CPU to their thermal design requirements. That's why two equally equipped notebooks can have vastly different performance results. It's a pity that OEMs almost never advertise their cTDP setting.

The desktop CPU market is a little different. Thermal design isn't that critical, CPUs are also sold directly to end users, etc. While it may depend on the mainboard manufacturer to enable the cTDP setting in their UEFI, it is generally possible to adjust it on desktop Ryzens as well. Many do that to find their efficiency sweet spot. I guess nothing could stop you from setting a 5600X's cTDP to 15W. That's what I did with my Athlon as well, albeit only from 25W to 15W. Besides the cTDP, there are other ways to increase efficiency: Lowering the PPT threshold, setting a negative vCore offset, etc. But that whole topic is too complex to explain or discuss here. You may search on PC hardware websites like AnandTech, Tom's Hardware, GamersNexus, Guru3D and such for more details if you're interested.

Here's a 5600X review by Tom's Hardware. They report that it draws 13W at idle at stock settings, which is 20% of the nominal 65W TDP. Other reviewers may get different results, due to the hundreds of possible combinations of configuration, hardware choice and measurement method. I assume that you can reduce those 13W even further with some adjustments, but don't take my word for it. Unfortunately, I don't own a Ryzen right now to test that myself.

That being said, I don't think you can realistically underclock a desktop CPU enough to match the power draw of its mobile counterparts. So the mobile CPU may always be more economical.

Perhaps an embedded CPU lineup like the Intel Xeon D or AMD's Ryzen Embedded V-series / EPYC Embedded would suit you? These are generally targeted more towards industrial use and have far more connectivity options than NUC-style mini PCs or notebooks. Commercial NAS vendors like Synology use them. Unfortunately, at first glance, both the Intel and AMD offerings look rather outdated at the moment.

-

Look at this thread, if you haven't already: https://forums.serverbuilds.net/t/guide-nas-killer-4-0-fast-quiet-power-efficient-and-flexible-starting-at-125/

-

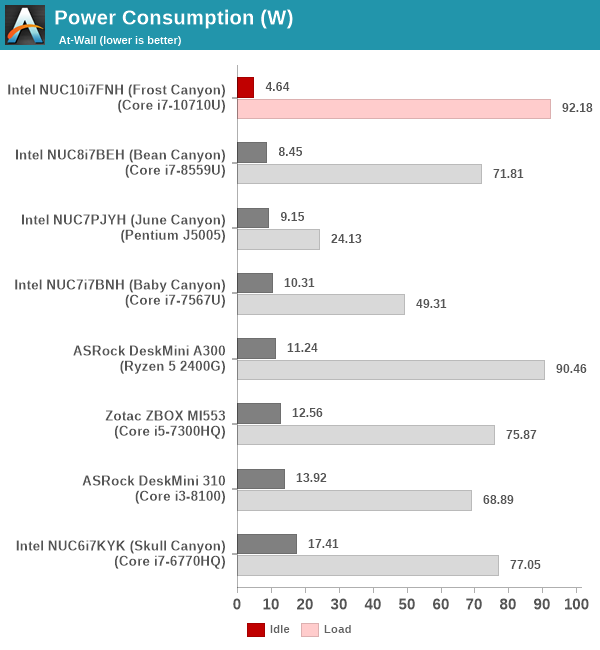

Results are all over the map, but it looks as though idle generally is low across a range of platforms:

These are all mini PCs, so it's not surprising that their idle power numbers are low. At any rate, it looks as though the idle wattage for the Ryzen 5 5600X CPU alone is around 26 watts:

Not particularly awesome, but it can do a lot more work when needed than the mini PCs above.

26W is manageable with a quiet fan. Total system draw will, of course, be higher. And here are the loaded numbers for contrast and comparison:


source: https://thinkcomputers.org/amd-ryzen-5-5600x-processor-review/8/

-

@NeverDie said in Best PC platform for running Esxi/Docker at home?:

At any rate, it looks as though the idle wattage for just the Ryzen 5 5600X cpu is around 26 watts:

See what I mean with "other reviewers may get different results"? ThinkComputers says 26W idle. Tom's Hardware says 13W. AnandTech says 11W. TPU says 50W (whole system). Who is right? It's unfortunate that there is no standard testing procedure every reviewer adheres to.
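To put those numbers side by side with the earlier "10 to 15% of TDP" estimate, here is a minimal sketch that divides each CPU-only idle reading quoted above by the 5600X's nominal 65W TDP. The TPU figure is whole-system power, so it is deliberately left out; the readings are only the ones quoted in this thread, not new measurements.

```python
NOMINAL_TDP_W = 65.0

# CPU-only idle readings for the Ryzen 5 5600X as quoted in this thread.
# TechPowerUp's 50 W is a whole-system figure, so it is left out on purpose.
IDLE_READINGS_W = {
    "ThinkComputers": 26.0,
    "Tom's Hardware": 13.0,
    "AnandTech": 11.0,
}


def idle_fraction_of_tdp(idle_w: float, tdp_w: float = NOMINAL_TDP_W) -> float:
    """Return idle power as a fraction of the nominal TDP."""
    if tdp_w <= 0:
        raise ValueError("TDP must be positive")
    return idle_w / tdp_w


if __name__ == "__main__":
    # The "10 to 15% of TDP" estimate, expressed in watts for comparison.
    low, high = 0.10 * NOMINAL_TDP_W, 0.15 * NOMINAL_TDP_W
    print(f"10-15% of {NOMINAL_TDP_W:.0f} W TDP = {low:.2f}-{high:.2f} W")
    for source, watts in IDLE_READINGS_W.items():
        pct = 100 * idle_fraction_of_tdp(watts)
        print(f"{source:15s} {watts:5.1f} W = {pct:4.1f}% of TDP")
```

The three readings work out to roughly 40%, 20% and 17% of TDP, so none of them lands inside the 10 to 15% band, which illustrates how much the test setup matters.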

Part of the different results may be due to mainboard selection. Some (I don't know how many and if they are rare or the majority) AM4 mainboards seem to be rather power hungry. Especially those with an X570 chipset. Some may add another 10 to 20W to the total power consumption, even if you use the same CPU. See here for example.

26w is manageable with a quiet fan.

Oh yeah, absolutely! Even under load. My 80W Xeon sitting right next to me here is inaudible for most people unless they hold their ear close to the PC case.

Oh, and please note that I'm not trying to sell you the 5600X just because I used it as a comparison a few times. IMHO, all CPUs in that category are grossly overpowered for the average home server unless you know that you will need it sooner or later.

-

It seems that a minimum of 3 disks is required for running ProxMox: one to boot from, one for ISOs, and one for VMs. I'm surprised it's that literal and can't partition one disk into 3 equivalent volumes. It also advises against using a USB flash drive as the boot disk.

-

@NeverDie I use proxmox with 2 drives (in mirror, so proxmox only sees 1 drive).

-

Well, good to know for the future. Right now I'm not finding good beginner instructions for a single drive system, or even a two drive system, so I guess I'll just use a cheap SSD over USB 3.0 as the bootdrive. I'll add a second SSD to my Intel NUC, and in theory that should work well enough in the short-term to evaluate ProxMox.

Presently, running just ProxMox with no VMs and no load, it draws 8 watts at idle on an Intel NUC-6i5SYS (that's an i5-6260U CPU) with 16GB of memory and a 1TB Samsung NVMe drive. After adding a SATA SSD and a USB SSD, it should meet the nominal minimum criteria for ProxMox's beginner setup. It's not an ideal arrangement, so I'll consider something bigger with more drive bays as a better setup in the future.

-

@NeverDie it certainly is possible

-

On second thought, I'll try ProxMox on a system I previously built for Esxi that has 9 drives:

Out with the old hypervisor and in with the new! This way I'll have plenty of drives to try BTRFS in a Linux VM, and whatever else.

-

@NeverDie looks like a Fractal Design Refine case?

-

@mfalkvidd said in Best PC platform for running Esxi/Docker at home?:

@NeverDie looks like a Fractal Design Refine case?

Nope. It's the Nanoxia Deep Silence 6 Super Tower Case. It promised the most soundproofing of nearly any computer case on the market at the time, and the large size makes wiring things up easier than working in cramped quarters. That was six years ago, though, so maybe there's something better available now.

The one case that stood out as being better was one that was completely sealed and which had a lot of heatsink fins milled into the outside of its case. Being sealed, I've got to believe it would have had the best soundproofing by far. It looked beautiful but was hugely expensive.

I imagine these days a bunch of M.2 NVMe drives would be the way to go: faster, smaller, and lower power.

I recently set up a Ryzen 3 3100 as a gaming computer for my son, and it's nearly silent even with just a cheap run-of-the-mill computer case. Come to think of it, maybe later tonight I should test its at-the-wall power draw when it's idle....

-

@NeverDie said in Best PC platform for running Esxi/Docker at home?:

Ryzen 3 3100 as a gaming computer

Reporting back: the entire computer draws 60 watts from the wall when idle, versus 305 watts when running a fractal graphics benchmark. The takeaway is that even 60 watts can be nearly silent if the fans and power supply are carefully chosen for quietness. This particular build uses a refurbished Corsair AX860i power supply, and at 60 watts its fan doesn't even spin. The case fan is a Phanteks PH-F120MP_BK_PWM, which is very quiet and, furthermore, spins only when the motherboard gets warm. The CPU fan is just the stock AMD Wraith Stealth cooler that came packaged with the CPU, but it too is PWM-controlled by the motherboard.
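For a rough idea of what those two wall readings would mean for a box left on 24/7, one can weight them by the "a home server will likely idle 95% of the time" rule of thumb from earlier in the thread. This is a minimal sketch: the 95% idle share is an assumption borrowed from that post, not something measured on this build, which is a gaming PC rather than a server.

```python
HOURS_PER_YEAR = 24 * 365  # 8760 h, ignoring leap years


def average_power_w(idle_w: float, load_w: float, idle_fraction: float) -> float:
    """Time-weighted average power for a box that idles `idle_fraction` of the time."""
    if not 0.0 <= idle_fraction <= 1.0:
        raise ValueError("idle_fraction must be between 0 and 1")
    return idle_fraction * idle_w + (1.0 - idle_fraction) * load_w


def annual_energy_kwh(avg_w: float) -> float:
    """Energy used by a year of continuous operation at avg_w, in kWh."""
    return avg_w * HOURS_PER_YEAR / 1000.0


if __name__ == "__main__":
    # Wall readings for the Ryzen 3 3100 build reported above.
    avg = average_power_w(idle_w=60.0, load_w=305.0, idle_fraction=0.95)
    print(f"average draw: {avg:.2f} W")
    print(f"per year:     {annual_energy_kwh(avg):.1f} kWh")
```

Under that assumption the average comes out around 72 W, or a bit over 630 kWh per year, so the idle figure dominates the bill even though the load figure is five times higher.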

-

Building quiet PCs is so addictive. Once you're used to it, every little rattle becomes an annoyance. It didn't take long until I had to replace all my spinning HDDs with SSDs. And as soon as everything was dead silent... I bought myself a clicky mechanical keyboard. Oh well...

Nanoxia Deep Silence and Fractal Design Define are indeed nice sound-insulated cases for quiet builds with plenty of space and features.

For silent cooling, I can recommend be quiet! (especially the Silent Wings fans) and Noctua (basically everything). The latter is quite pricey, though.

-

@NeverDie said in Best PC platform for running Esxi/Docker at home?:

It seems that a minimum of 3 disks is required for running ProxMox: one to boot from, one for ISO's, and one for VM's. I'm surprised it's that literal and not able to partition one disk into 3 equivalent disks. It advises not to use a USB flash for the boot disk.

Not true. I have 1 SSD running Proxmox, and two more disks passed through to VMs for storage, but the Proxmox part is entirely on the small SSD. It is just partitioned using LVM.

-

@monte said in Best PC platform for running Esxi/Docker at home?:

It is just partitioned using LVM

@monte How did you do it? Did you pre-partition the disk before installing Proxmox, and then Proxmox just found what you did and adopted it, or is it more involved than that?

I started with the ProxMox beginner video, which used 3 disks and gave no hints on how to use fewer:

-

@NeverDie to be honest, I don't remember.

It was so long ago; I've just been updating the system since then. But I guess there might be an option in the installer... I'll try it in a VM, you made me curious.

UPDATE: I've just run the install, and it's plain and simple, no extra options. It partitioned the disk automatically. The only drawback is that you can't fine-tune the partition sizes, but that can be done later with some LVM magic.

-

@monte said in Best PC platform for running Esxi/Docker at home?:

@NeverDie to be honest, I don't remember.

It was so long ago; I've just been updating the system since then. But I guess there might be an option in the installer... I'll try it in a VM, you made me curious.

UPDATE: I've just run the install, and it's plain and simple, no extra options. It partitioned the disk automatically. The only drawback is that you can't fine-tune the partition sizes, but that can be done later with some LVM magic.

That's what I started with too, but when I went to upload ISOs or create VMs, ProxMox said there was no disk available for that.

-

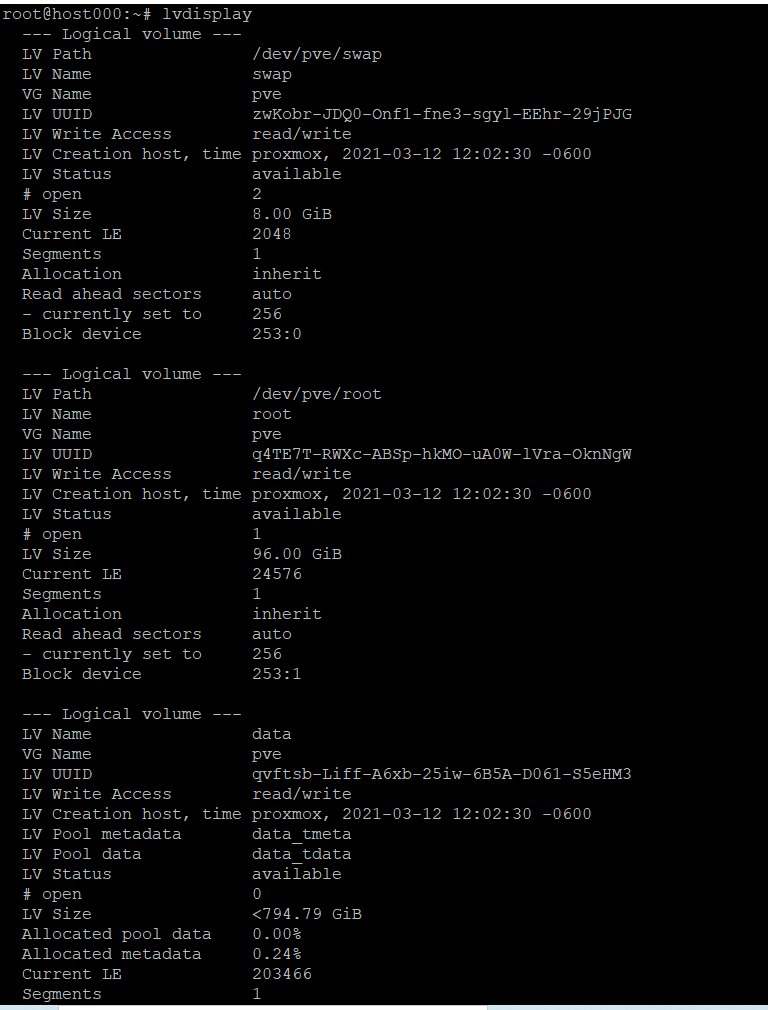

So, to be precise, the Proxmox installer creates an LVM VG (volume group) called `pve`, in which it creates the LVs (logical volumes) `root` and `swap`, plus another volume called `data`, which is in fact a pool for the thin volumes that will be created for each VM and container. So ISOs, backups and other stuff related to Proxmox itself will be located on the `root` volume.

-

@NeverDie that's strange. What's the size of your disk? You can also see the LVM structure with the `lvdisplay` command. As I've mentioned, you can also manage the sizes of those LVs from the console.

-

@monte said in Best PC platform for running Esxi/Docker at home?:

What's the size of your disk?

1 terabyte. It's a Samsung nvme SSD.

-

I've tried googling for instructions on exactly how to do it. It seems that others besides me have struggled with this as well. The only proposed solution that someone claimed worked, which I haven't tried yet, is to start with a Debian install and then upgrade it to ProxMox. But there were no simple 1-2-3 instructions for that either, so I just threw in the towel and decided to go with 3 disks, like in the beginner tutorial.

-

@NeverDie this is completely doable within the Proxmox installation. Please show me the output of `lvdisplay`.

Or, if you've already set up your system, then let it be.

-

@monte Getting it now. It will look a little screwy because the present incarnation has Proxmox on a USB drive (yeah, I know, heavily not recommended).

-

Better yet, I'll reinstall it to the one 1TB disk and send you the lvdisplay output from that, which will make more sense in this context.

-

OK, did that.

This is with Proxmox installed to the single 1TB disk. No USBs involved.

-

@NeverDie seems to me that 96GB is plenty of space for ISO's

-

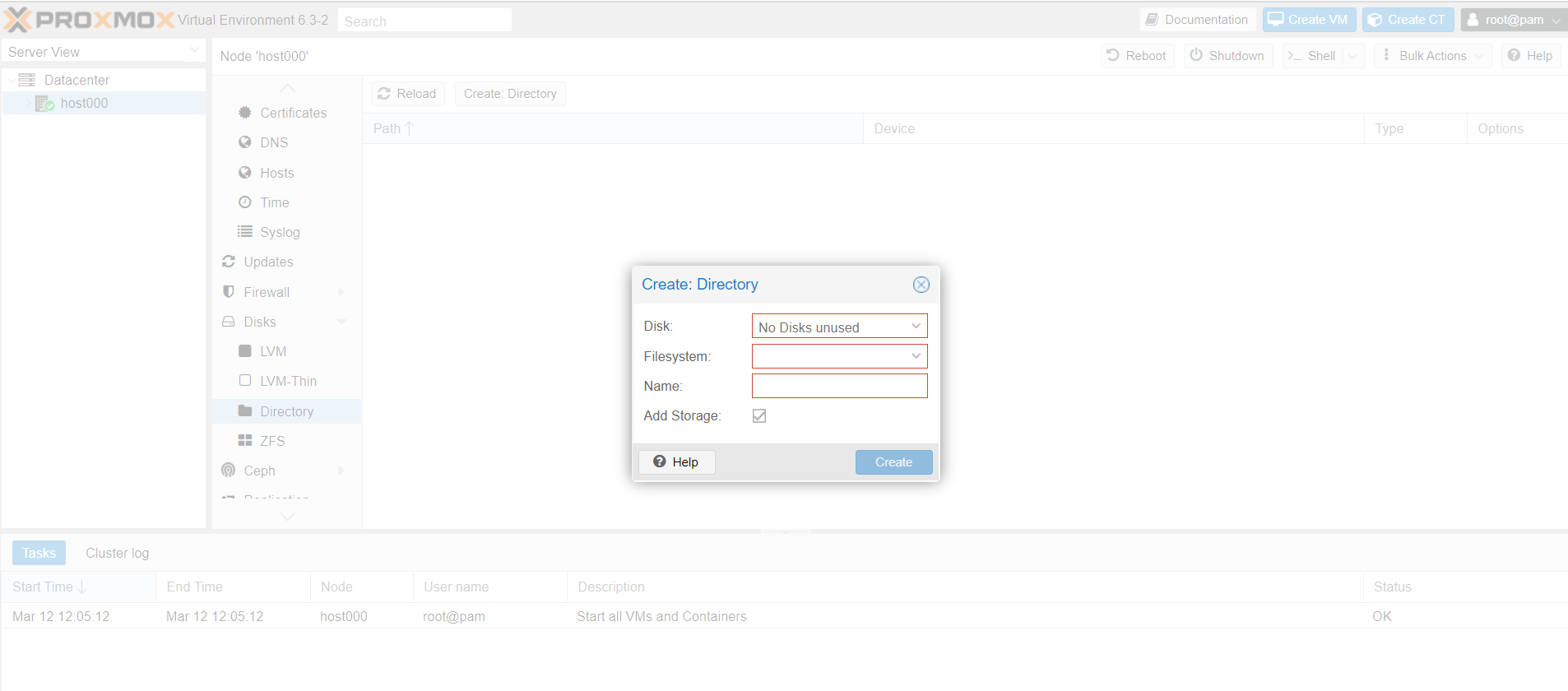

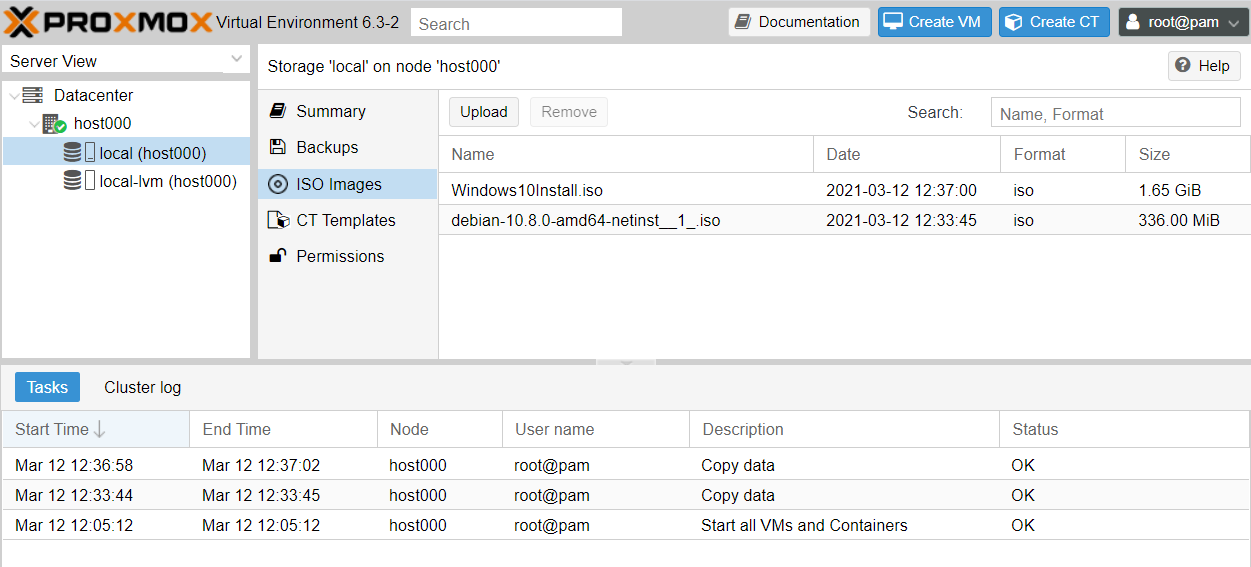

@monte Right. But then I get this:

which is where I get stuck. If I add more physical disks, then this doesn't happen.

-

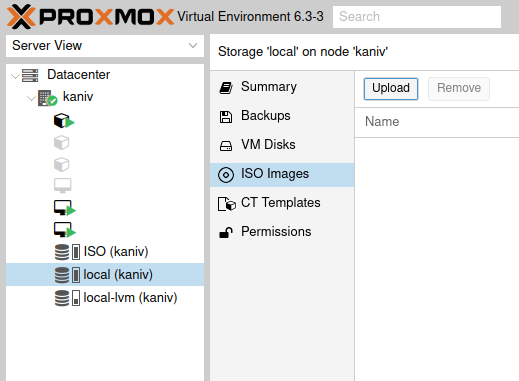

@NeverDie expand the `host000` item in the list on the left. Choose the storage `local` at the end of the list, choose "ISO Images", and hit "Upload".

Then press "Create VM" at the top right of the GUI.

-

@monte Thank you! That worked:

I uploaded both Debian and Windows ISOs.

So, after starting the VM I gather I just click "monitor" to see the VM's virtual display?

-

@NeverDie no, you use the "Console" button just below the "Summary" button.

I must add that I've never installed Windows in Proxmox, so I can't say how the install process works with it, but I think someone on Google knows.

-

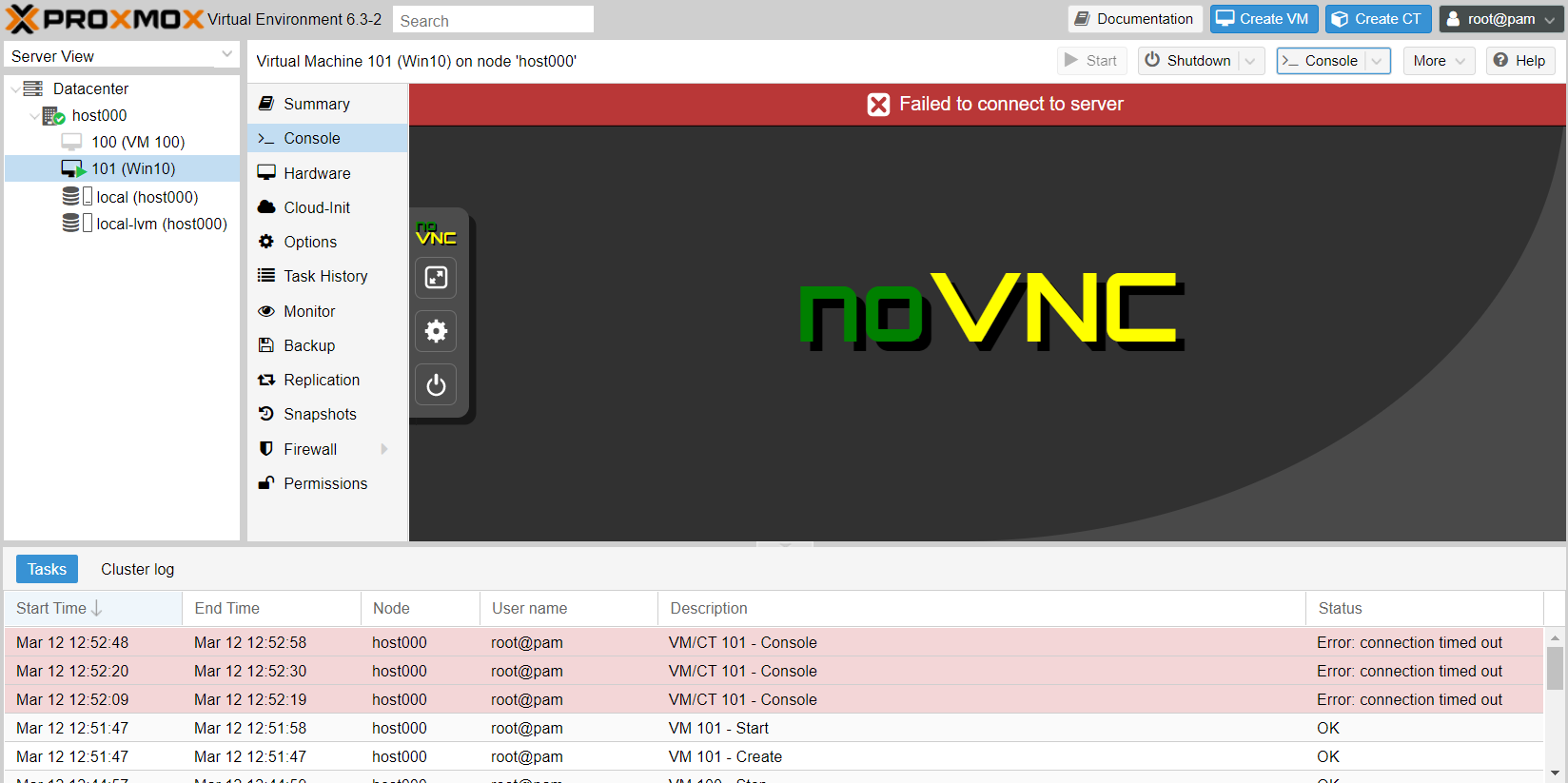

@monte Right. Just tried it, but got this:

Well, I won't burden you with more questions. Thanks for your help!

-

@NeverDie first video in search How To Create Windows 10 Virtual Machine on Proxmox – 17:36

— PhasedLogix IT Services

-

Reporting back: after trying many things and much frustration, it turned out that the reason the noVNC console failed was, of all things, Google Chrome.

Who'd have guessed? Thank you Google.

Too bad there's no emoji for sarcasm.

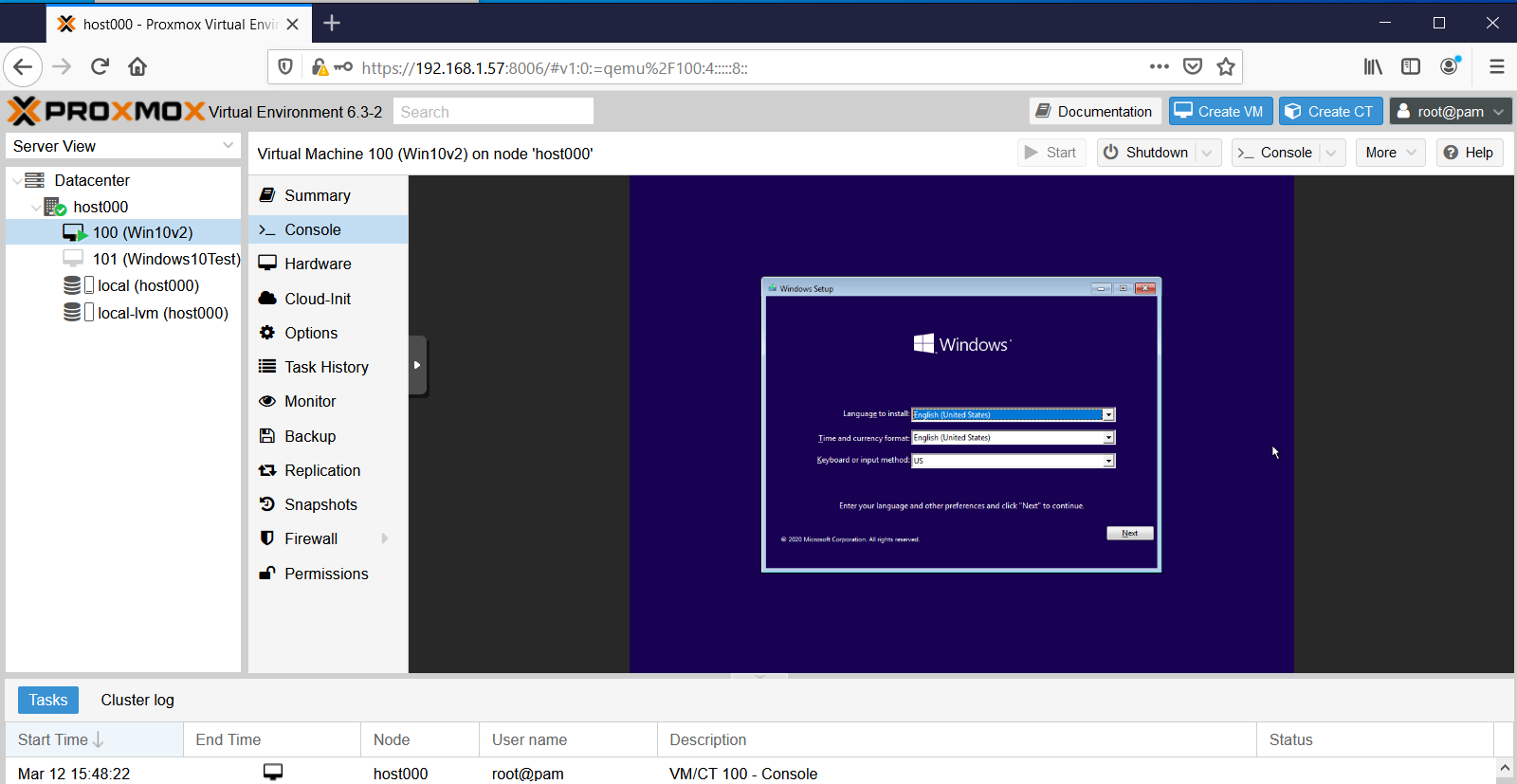

Actually, I like Google Chrome a lot, so I hadn't even suspected it. Switching to Firefox fixed the issue:

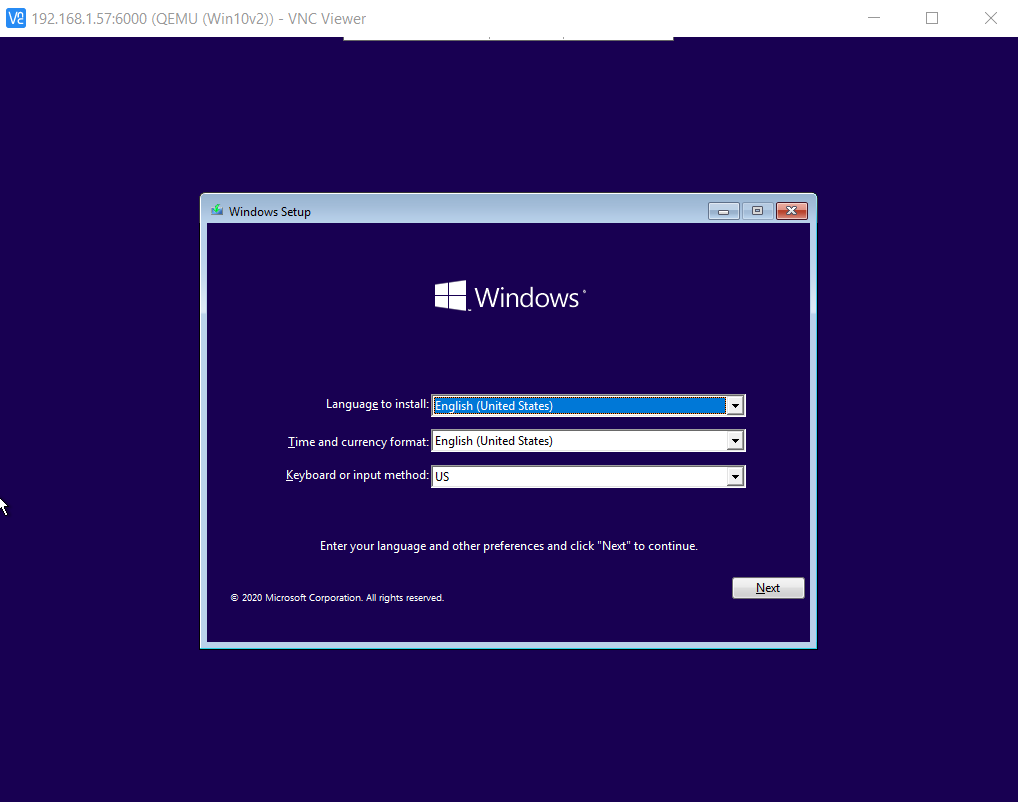

I'm also able to connect to it using VNC Viewer by typing

`change vnc 0.0.0.0:100`

into the VM's monitor window, as described here: https://pve.proxmox.com/wiki/VNC_Client_Access#Standard_Console_Access

I even tried installing the noVNC Google Chrome extension, but still no joy. I have no idea why Chrome failed at this, but at least now the culprit is identified.
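A note for anyone pointing a standalone viewer at the VM: in `change vnc host:N`, N is a VNC display number, and by the usual VNC convention display N listens on TCP port 5900 + N, so `:100` corresponds to port 6000. The tiny helper below is purely illustrative (the function names are hypothetical) and just spells out that mapping.

```python
from __future__ import annotations

VNC_BASE_PORT = 5900  # display :0 listens on port 5900 by VNC convention


def vnc_display_to_port(display: int) -> int:
    """Map a VNC display number (the N in host:N) to its TCP port."""
    if display < 0:
        raise ValueError("display number must be non-negative")
    return VNC_BASE_PORT + display


def parse_vnc_target(target: str) -> tuple[str, int]:
    """Split a 'host:display' string into (host, tcp_port)."""
    host, _, display = target.rpartition(":")
    return host, vnc_display_to_port(int(display))


if __name__ == "__main__":
    print(parse_vnc_target("0.0.0.0:100"))  # ('0.0.0.0', 6000)
```

If a viewer or firewall rule asks for a port rather than a display number, 6000 is the number to use for the `:100` example above.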

-

@NeverDie I'm happy that you've made progress! I couldn't have helped you with that, because I use Firefox by default.

Strange, I've just tried it in Chromium with Lubuntu as the guest OS and it works fine... I don't have Chrome installed, so I can't check the exact issue you're having.

-

To save searching time for anyone else who also wants to run a Windows VM on ProxMox, I found that this guy has the most concise yet complete setup instructions:

Virtualize Windows 10 with Proxmox VE – 12:03

— Techno Tim

-

A couple more gotchas I ran across.

-

To get balloon memory working properly, you still need to go through the procedure that starts at around 14:30 in the YouTube video that @monte posted above, where you use Windows PowerShell to activate the memory Balloon service.

-

Interacting directly with the Windows VM using just VNC is sluggish. Allegedly the way around this is to connect to the Windows VM with Windows Remote Desktop rather than VNC. However, here's the gotcha if you opt to go that route: the Windows VM needs to be running Windows Pro. It turns out that Windows Home can't act as a Remote Desktop host.

Gotcha!

-

Reporting back: I've been able to create a ZFS RAIDZ3 pool on my Proxmox server, which is a nice milestone. I also discovered just today that, during installation, the ProxMox boot/root can fairly easily be installed onto a ZFS pool of its own. Pretty cool!

This means the root itself can be configured to run at RAIDZ1/2/3, giving it the same degree of protection as the data entrusted to it. A reputable 128GB SSD costs just $20 these days, so I'll try combining four of them as a RAIDZ1 boot pool for the root. I'd settle for 64GB SSDs or even less, but practically speaking, 128GB seems to be the new minimum that the reputable brands are manufacturing. Used 32/64GB SSDs on eBay are offered for nearly the same price as, or even more than, brand-new 128GB SSDs. Go figure. It makes no sense unless you're beholden to a particular part or model number. All this means that I'll have a zpool boot drive with effectively 3x120GB=360GB of usable space, which will be plenty to house ISOs and VMs as well. So from the get-go onward, everything (the root, the ISOs, the VMs, and the files entrusted to them) will be RAIDZ-protected. Pretty slick!
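The 3x120GB arithmetic can be written out: RAIDZ with p parity disks across n equal disks leaves roughly (n - p) disks' worth of space, and a "128GB" SSD is 128 x 10^9 bytes, which is about 119 GiB, hence the ~120 figure. This is only a first-order sketch: it ignores ZFS metadata, allocation padding and the slop space ZFS reserves, so the space actually reported will be somewhat lower.

```python
GB = 10**9    # drive vendors use decimal gigabytes
GIB = 2**30   # most tools report binary gibibytes


def raidz_usable_bytes(n_disks: int, disk_bytes: int, parity: int) -> int:
    """First-order RAIDZ estimate: (n - parity) disks of data, no ZFS overhead."""
    if parity not in (1, 2, 3):
        raise ValueError("RAIDZ parity level must be 1, 2 or 3")
    if n_disks <= parity:
        raise ValueError("need more disks than parity devices")
    return (n_disks - parity) * disk_bytes


if __name__ == "__main__":
    per_disk = 128 * GB
    usable = raidz_usable_bytes(n_disks=4, disk_bytes=per_disk, parity=1)
    print(f"one disk:     {per_disk / GIB:.1f} GiB")
    print(f"RAIDZ1 of 4:  {usable / GIB:.1f} GiB usable (before ZFS overhead)")
```

The same function shows the trade-off for the other levels: four of these disks in RAIDZ2 would leave about two disks' worth, and RAIDZ3 about one.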

I suppose the next step after that would be to build a RAIDZ NVMe cache to hopefully get a huge jump in speed. Most motherboards aren't blessed with four M.2 NVMe slots, but there are quad-NVMe adapter cards that let four NVMe SSDs be slotted into a single PCIe 3.0 slot.

-

It turns out that to take full advantage of four NVMe SSDs you'd need at minimum PCIe 3.0 x16 with motherboard support for bifurcation, and mine falls just short of that with PCIe 3.0 x8. Nonetheless, the max speed of PCIe 3.0 x8 is 7880MB/s, which is 14x the speed of a SATA SSD (550MB/s) and more than enough to saturate even 40GbE Ethernet. So, for a home network, that suggests running apps on the server itself in virtual machines and piping the video to thin clients through something like VNC. I figure that should give everyone noticeably faster performance than the current situation, where everyone runs their own PC. How well that theory works in practice... I'm not sure.
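The 7880MB/s and "14x" figures follow from the PCIe 3.0 line rate: 8 GT/s per lane with 128b/130b encoding, i.e. just under 1 GB/s per lane before packet overhead. A quick check, treating 40GbE as a raw 40 Gbit/s (real Ethernet throughput will be a bit lower once framing is counted):

```python
PCIE3_GT_PER_S = 8.0          # PCIe 3.0 line rate per lane, in gigatransfers/s
PCIE3_ENCODING = 128 / 130    # 128b/130b line-encoding efficiency


def pcie3_mb_per_s(lanes: int) -> float:
    """Raw PCIe 3.0 bandwidth in MB/s (10^6 bytes), before packet overhead."""
    bits_per_s = PCIE3_GT_PER_S * 1e9 * PCIE3_ENCODING * lanes
    return bits_per_s / 8 / 1e6


def ethernet_mb_per_s(gbit: float) -> float:
    """Line rate of an Ethernet link in MB/s, ignoring framing overhead."""
    return gbit * 1e9 / 8 / 1e6


if __name__ == "__main__":
    x8 = pcie3_mb_per_s(8)
    sata_ssd = 550.0  # typical SATA SSD ceiling quoted above
    print(f"PCIe 3.0 x8: {x8:.0f} MB/s")
    print(f"vs SATA SSD: {x8 / sata_ssd:.1f}x")
    print(f"40GbE:       {ethernet_mb_per_s(40):.0f} MB/s")
```

That gives about 7877 MB/s for x8 (about 14.3x a 550 MB/s SATA SSD) against 5000 MB/s for 40GbE, so the link, not the PCIe slot, would be the ceiling.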

-

Final report: I tried it out, but having to run a Windows thin client to connect via Windows Remote Desktop to a Windows VM running on a server was a buzzkill. The hardest case I've tried is watching high-definition YouTube videos. The server can handle the workload even without a graphics card, and still without maxing out the CPU cores. It works, but the visual fidelity goes down. I guess that's because the video is first displayed on the virtual screen inside the VM on the server and then re-encoded and pumped to the thin client? I'm not sure if that's what's actually happening, but if so, maybe there's a way around it that doesn't involve re-encoding. Ideally, being able to connect losslessly to any VM from, say, a Chromebook would have been nice.

Lastly, I have been able to move VMs from one physical machine to another and get them to run. Maybe I'm doing it wrong, but for my first attempt I did it by first "backing up" the VM to the server using ProxMox Backup Server and then "restoring" the VM to the target machine (in my test case, the same physical machine as the one running ProxMox Backup Server). Doing it this way, I encountered two bottlenecks. #1: moving the VM over 1-gigabit Ethernet takes a while, though upgrading to 10-gigabit Ethernet or faster might address that. #2: even longer than #1, the "restoring" process takes quite a while, even though in my test case it didn't involve sending anything over Ethernet. This was a surprise. I'm not sure why "restoring" takes so long, but it takes much longer than the original backup did. Unless the "restoring" is doing some special magic to adapt the VM to the new target machine's hardware, then in theory a straight copy of the VM file without any "restoring" should be much, much faster. I'll try that next.
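For a feel of bottleneck #1, transfer time is roughly size divided by effective link speed. The sketch below uses a hypothetical 32 GiB disk image (the VM size used as an example later in this thread) and assumes about 90% of line rate is actually achieved; both numbers are illustrative, not measurements, and the sketch says nothing about bottleneck #2, which isn't network-bound.

```python
GIB = 2**30


def transfer_seconds(size_bytes: int, link_gbit: float, efficiency: float = 0.9) -> float:
    """Estimate wall-clock seconds to move size_bytes over a network link.

    efficiency is an assumed fraction of line rate actually achieved.
    """
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in (0, 1]")
    bytes_per_s = link_gbit * 1e9 / 8 * efficiency
    return size_bytes / bytes_per_s


if __name__ == "__main__":
    image = 32 * GIB  # hypothetical VM disk size
    for gbit in (1, 10):
        minutes = transfer_seconds(image, gbit) / 60
        print(f"{gbit:>2} GbE: ~{minutes:.1f} min")
```

Under these assumptions that is about 5 minutes at 1GbE versus about half a minute at 10GbE, provided the disks on both ends can keep up with the faster link.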

-

@NeverDie have you tried Proxmox's live migration feature? It works very well for me. Downtime is less than a second when migrating from a datacenter in Germany to a datacenter in Finland. Migration time depends on network speed and VM size.

-

Reporting back: I'd like to find a BTRFS equivalent to ProxMox, because although I like the reliability that ZFS can, in theory, offer, it's simply not a native Linux file system. As a result, if I want to back up VMs to a zpool dataset on, say, a TrueNAS server using ProxMox Backup Server, I first have to reserve a giant fixed-size chunk of storage on a TrueNAS ZFS dataset by creating a ZVOL on it, and then overlay that ZVOL with a Linux file system like ext4 so that ProxMox Backup Server can read/write the backups on the ZVOL over iSCSI. However, I've got to believe that doing it this way largely undermines the data integrity that ZFS offers, and it also means I have a big block of ZVOL storage reserved for the worst case that will be largely underutilized compared to if ProxMox could read/write ZFS datasets natively.

So, instead of doing that, which I did get to "work", I've decided to set up a regular Linux ext4 logical volume on an NVMe drive accessible to ProxMox for storing VMs and creating VM backups, because ProxMox has no trouble reading/writing an ext4 file system. I have ProxMox write its backups to that Linux logical volume, and then I copy those relatively small files (small compared to the giant ZVOL I would otherwise have to reserve to contain them) over to a ZFS dataset on a TrueNAS server (which is running inside a VM on ProxMox).

I don't yet know for sure, but I presume (?) BTRFS wouldn't require these kinds of contortions, since BTRFS should be native to the Linux operating system, just like ext4 is.

Footnote: The above isn't entirely accurate, because I can and do boot ProxMox itself on a RAIDZ1, and ProxMox can happily write and back up VMs to that ZFS drive. However, the gotcha is that, for some reason, when writing directly to a ZFS file system, ProxMox insists on writing both the VMs and VM backups as raw block files instead of the much more compact qcow2. Ironically, when writing to an ext4 file system, ProxMox can write both VMs and VM backups in the qcow2 file format. So a VM of, say, PopOS might occupy 3GiB as a qcow2 file, but ProxMox would write it as a 32GiB raw file if that's the size I told ProxMox to allocate when I configured the VM, or 64GiB if I wanted to cover a possibly larger future PopOS configuration. That kind of size discrepancy adds up in a hurry as you create, back up, and manage more and more VMs. I thought automatic file compression might make it a moot point, but it doesn't seem to work as well on raw files as I would have guessed, maybe due to leftover file remnants within the raw block as files are moved around, shrunk, or "deleted" within the disk image. A file system wouldn't see those remnants, but they'd still be in the disk image, and automatic compression on a raw disk image has no way of knowing what's leftover junk vs. essential file data that's still in use.

Or perhaps the difference is at least partly because a Linux LVM specifically allows for thin provisioning?

Thin Provisioning

A number of storages, and the Qemu image format qcow2, support thin provisioning. With thin provisioning activated, only the blocks that the guest system actually use will be written to the storage. Say for instance you create a VM with a 32GB hard disk, and after installing the guest system OS, the root file system of the VM contains 3 GB of data. In that case only 3GB are written to the storage, even if the guest VM sees a 32GB hard drive. In this way thin provisioning allows you to create disk images which are larger than the currently available storage blocks. You can create large disk images for your VMs, and when the need arises, add more disks to your storage without resizing the VMs' file systems.

https://pve.proxmox.com/wiki/Storage

Or maybe it's just the arbitrary current state of ProxMox development, and this difference will get fixed in the future? I have no idea.
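The thin-provisioning idea in that quote has a simple file-level analogy on Linux or macOS file systems that support sparse files: a file can report a large apparent size while only the blocks actually written consume space. The sketch below is that analogy, not how Proxmox itself allocates LVM-thin or ZFS storage; it creates a hypothetical 64 MiB "disk image", writes 1 MiB of data into it, and compares apparent size with allocated space. On a file system without sparse-file support the two numbers will simply match, and `st_blocks` is not available on Windows.

```python
from __future__ import annotations

import os
import tempfile

MIB = 2**20


def make_sparse_image(path: str, apparent_bytes: int, written_bytes: int) -> None:
    """Create a file of apparent_bytes length, but only write written_bytes of data."""
    with open(path, "wb") as f:
        f.write(os.urandom(written_bytes))  # the "used" part of the virtual disk
        f.truncate(apparent_bytes)          # extend the length without writing data


def sizes(path: str) -> tuple[int, int]:
    """Return (apparent size, bytes actually allocated on disk)."""
    st = os.stat(path)
    return st.st_size, st.st_blocks * 512  # st_blocks counts 512-byte units


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        img = os.path.join(d, "thin.img")
        make_sparse_image(img, apparent_bytes=64 * MIB, written_bytes=1 * MIB)
        apparent, allocated = sizes(img)
        print(f"apparent:  {apparent // MIB} MiB")
        print(f"allocated: {allocated / MIB:.1f} MiB")
```

A raw image that has been written all the way through (or filled with leftover data, as described in the footnote above) loses this advantage, because every block then counts as used.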

I'm not really expecting anyone here knows the answer, so this is mostly just a follow-up as to how things went. Although not ideal, I can make do with the workaround I've outlined in this post.

-

@NeverDie yep, ZFS is a strange beast to me. I tried to avoid it, because I have neither the hardware nor an application to utilize its potential. But I was also afraid of LVM, and then one time I had to recover a Proxmox setup that I'd messed up while trying to migrate it to a bigger drive without reinstalling. At one point I thought I'd broken the whole volume group, but somehow I got it back after a few rebuilds from a semi-broken backup image.

It reminds me of the title of this great movie:

-

Reporting back: Good news! Rather than use iSCSI, I found that I could create a SAMBA mount point and use it to connect to a ZFS dataset on TrueNAS, running in a virtual machine within ProxMox. This allows ProxMox to back up VMs to it as a regular ZFS dataset without having to pre-allocate storage, as was the case with a ZVOL. I've tested this out, and it works. So it's all good now. I'm once again happy with ProxMox.

BTW, a SAMBA share has the added advantage of being easily accessible from Windows File Explorer. Once set up, I'm finding that SAMBA (aka SMB) on TrueNAS provides easy file sharing for a mix of Linux and Windows machines on one network.

An unrelated but good find: BTRFS on regular Linux works great, especially when combined with Timeshift for instant snapshots and rollbacks. A rollback does require a reboot to take effect, but it's nonetheless better than no rollback ability at all. BTRFS is one of the guided install options on both Ubuntu and Linux Mint Cinnamon. For Microsoft Windows users, I think you'll find that the GUI on Linux Mint Cinnamon is a good match for your Windows intuitions. I'll probably settle on Mint Cinnamon for its stability and intuitive ease of use. The pre-configuration that went into Mint makes it much easier for new Linux users to pick up and start using immediately, compared to a plain vanilla Debian install, which was my previous go-to because of Debian's high stability.

Of greater relevance: "Virtual Machine Manager" (VMM) is a QEMU-KVM hypervisor GUI that runs on Mint and looks strikingly similar to ProxMox during the process of configuring a new VM. I was looking into VMM as a BTRFS-based hypervisor alternative to ZFS-based ProxMox, and though it's not exactly apples-to-apples, at first look VMM seems quite capable. In some ways, the VMM GUI also looks both more detailed and more polished than ProxMox's. I also looked very briefly (maybe too briefly) at Cockpit and GNOME Boxes, but my first impression was that both looked like works in progress. Are there any other hypervisors, not already mentioned in this thread, that run on top of BTRFS and are worth considering?

-

Since prices for both GPUs and Ryzen 9 5950X CPUs have gone crazy, I ended up ordering a Ryzen 7 5700G, which is 65W nominal. Its CPU performance is a little lower than the 5800X's (at 105W), but the 5700G is priced about the same and has integrated graphics. I'd like to use the 5700G as the host for virtual machines, and now I'm wondering whether the integrated graphics can be shared among multiple virtual machines or whether at most one VM can make use of it. Offhand, I'm not sure how I would even test that, except maybe with some kind of graphics benchmark.

-

I've been looking at building a new server myself. Currently I have an old HP desktop machine with an i5 and 16GB of RAM running as a server. It works fine (and it was cheap). But I want to play more with Docker / Kubernetes, and perhaps do some more machine learning on ZoneMinder images, so I could use a CPU with a bit more oomph. Perhaps an Nvidia graphics card for the ML part...

The only problem is that I'm not ready to spend $1000 or more on hardware, so I'll probably just wait for prices to drop again...

(Currently I just run Ubuntu Server, with Docker on top of that. No VMs or the like.)

-

@tbowmo I have an Nvidia GT1030 running YOLO object detection on camera feeds at 7 fps with the full model and 20-30 fps with YOLO-tiny. Considering that you don't need to process every frame your cameras produce, and 1 or even 0.5 fps per camera is practical enough, you can get this thing going on a budget.
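That point can be turned into a rough camera budget: divide the detector's throughput by the frame rate you actually need per camera. The sketch below uses the GT1030 figures quoted above and treats them as sustained throughput, which is an assumption; decoding and resizing frames also cost time in a real pipeline.

```python
import math


def cameras_supported(detector_fps: float, fps_per_camera: float) -> int:
    """How many cameras one detector can keep up with at a given sampling rate."""
    if fps_per_camera <= 0:
        raise ValueError("fps_per_camera must be positive")
    return math.floor(detector_fps / fps_per_camera)


if __name__ == "__main__":
    # Detector throughput quoted above for a GT1030.
    models = {"full model": 7.0, "tiny (low end)": 20.0, "tiny (high end)": 30.0}
    for name, fps in models.items():
        for per_cam in (1.0, 0.5):
            n = cameras_supported(fps, per_cam)
            print(f"{name:16s} @ {per_cam} fps/camera -> {n} cameras")
```

Even the full model at 1 fps per camera would cover about 7 cameras, and halving the sampling rate doubles that.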

-

@tbowmo For the machine learning part, I bet there are cloud-based RTX cards you could use, or perhaps AI cloud servers. Last I checked, which was a while ago, Google was offering TensorFlow in the cloud for free. I'm using Nvidia GeForce Now, which is meant for gaming, but as a data point it offers access to a dedicated RTX 3080 on a VM for a mere $10 per month. That's a trivially low price compared to buying physical RTX 3080 hardware at current market rates.

-

@NeverDie I prefer to keep video footage local. I'm not saying that I need an RTX 3080/3090; they're too pricey. I'm looking more towards a 1050 Ti or the like. That should be enough, if I'm not going for a Coral TPU. I'm waiting for a friend to get hold of some TPUs and give his verdict.

-

@monte Currently I'm on the order of 0.2 fps. It would be nice to have a bit more throughput, as I'm planning to add more cameras along the way (currently I have 2 cameras running 24/7, but I have 2 more that I just need to put up).

-

@tbowmo I don't know your setup, but optimizing the code that serves frames to the detector can help more than you might think.

-

@monte said in Best PC platform for running Esxi/Docker at home?:

@tbowmo I don't know your setup, but optimizing the code that serves frames to the detector can help more than you might think.

I'm using ZoneMinder and zmeventserver for this, all running in Docker at the moment. I haven't had the time to dig into the machine learning side beyond scratching the surface and trying to apply prior work to my own setup.

-

The Ryzen 9 5900X seems to be the sweet spot for bang per buck (lots of hefty cores for the price), so I've put in an order for one of those as well. [Edit: I canceled my Ryzen 9 5900X order, which seemed perpetually backordered, and decided to buy a Ryzen 9 5950X at scalper's prices instead to get it more quickly. Now I understand how the disproportionately high price of the Ryzen 9 5950X (compared to the Ryzen 9 5900X) can be justified: when you take into account the full system cost, rather than just the CPU cost in isolation, the cost per core of a 5950X system becomes more or less a wash compared to a 5900X system built on the same motherboard and memory, because the 5950X spreads that shared platform cost over 16 cores instead of 12. If the total system cost is even higher (if, say, it's a NAS or runs video cards), then the cost per core of the 5950X system becomes lower than that of a 5900X system, even with scalper pricing on the CPU itself.
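The "wash" argument is easy to check: divide the CPU price plus the cost of everything else by the core count. The prices below are made up purely for illustration (they are neither figures from this post nor current market prices); with them, both systems cost exactly the same per core at an $800 platform cost, and the 16-core system pulls ahead as the rest of the system gets more expensive:

```python
def cost_per_core(cpu_price: float, platform_price: float, cores: int) -> float:
    """Total system cost divided by core count. platform_price is everything
    except the CPU (board, RAM, PSU, case, drives, add-in cards...)."""
    if cores <= 0:
        raise ValueError("cores must be positive")
    return (cpu_price + platform_price) / cores


if __name__ == "__main__":
    # HYPOTHETICAL prices, for illustration only.
    cpu_12 = 550.0   # 12-core part (5900X-class)
    cpu_16 = 1000.0  # 16-core part (5950X-class) at an inflated price
    for platform in (800.0, 2000.0):
        print(f"platform ${platform:.0f}: "
              f"12-core ${cost_per_core(cpu_12, platform, 12):.2f}/core, "
              f"16-core ${cost_per_core(cpu_16, platform, 16):.2f}/core")
```

In general the two tie when the platform cost equals 3 × (16-core price) - 4 × (12-core price); above that, the 16-core system is cheaper per core.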

I'll be putting the Ryzen 9 5950X on an ASUS X570 workstation motherboard that comes with 3 PCIe 4.0 slots, whose BIOS is advertised as being able to divvy up the PCIe lanes in a somewhat flexible manner. That should make it easier to assign different graphics cards to different VMs, or to add 10GbE Ethernet and/or a SATA controller without interference between devices. It's the only motherboard I found with these programmable bifurcation features that also supports ECC memory, though the ASRock X570D4I-2T may have something similar and also looks like a good board, if possibly a bit cramped.

Something I want to try: using bifurcation to run more than one NVMe drive in parallel for higher throughput. From what I've read, it should be possible to get more than 20 GB/s. Maybe 28 GB/s read speed if four PCIe 4.0 NVMe drives are used in a single PCIe 4.0 x16 slot with an inexpensive adapter board? And would I notice significantly faster VM load times with that approach, assuming a VM is somehow spread over the four NVMe drives using one of the ZFS RAID configurations?]
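The 28 GB/s figure can be sanity-checked from the link speed. PCIe 4.0 signals at 16 GT/s per lane with 128b/130b encoding, so an x16 slot split x4/x4/x4/x4 gives each drive roughly 7.9 GB/s of raw link bandwidth. The sketch assumes drives rated around 7 GB/s sequential read and ignores packet/protocol overhead, so real numbers will be somewhat lower:

```python
def pcie_lane_gbytes_per_s(gt_per_s: float, payload_bits: int = 128, line_bits: int = 130) -> float:
    """Raw per-lane bandwidth in GB/s after line encoding (128b/130b for PCIe 3.0+).
    Packet and protocol overhead are ignored, so real throughput is lower."""
    return gt_per_s * payload_bits / line_bits / 8


def bifurcated_read_gbytes_per_s(drives: int, lanes_per_drive: int,
                                 drive_read: float, gt_per_s: float = 16.0) -> float:
    """Aggregate sequential read of several drives sharing one bifurcated slot;
    each drive is limited by the smaller of its own link and its rated speed."""
    link = pcie_lane_gbytes_per_s(gt_per_s) * lanes_per_drive
    return drives * min(link, drive_read)


if __name__ == "__main__":
    lane = pcie_lane_gbytes_per_s(16.0)                       # PCIe 4.0, one lane
    print(f"x1: {lane:.3f} GB/s, x4: {lane * 4:.2f} GB/s, x16: {lane * 16:.1f} GB/s")
    # ASSUMED ~7 GB/s rated sequential read per drive.
    print(f"4 drives: {bifurcated_read_gbytes_per_s(4, 4, drive_read=7.0):.1f} GB/s")
```

Note that this only bounds sequential throughput; whether VM load times improve depends on how much of the load is actually storage-bound.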

-

I'm fairly happy with ProxMox, but FreeBSD's Bhyve hypervisor can clone (or snapshot) a VM in less than a second, even on a 10-year-old laptop, whereas ProxMox cannot (even if, as I have done, ZFS is installed as the file system everywhere ProxMox uses). Is that because FreeBSD's ZFS is more complete or more tightly integrated? Has anyone here done a compare/contrast of Bhyve vs. ProxMox? I'm also intrigued because FreeBSD/Bhyve has "jails" for added security of VMs. Also, FreeBSD is a more complete OS, so I suspect the whole thing is better scrubbed than Linux distros, which are thrown together with each distro incorporating its own set of packages.

Apple's current OS is built on Darwin, which incorporates a large amount of FreeBSD code rather than Linux, so that's at least a minimal endorsement of FreeBSD, even if it turned out that Apple's main reason for choosing FreeBSD over Linux was FreeBSD's more favorable licensing terms.

-

@NeverDie said in Best PC platform for running Esxi/Docker at home?:

FreeBSD is a more complete OS, and so I suspect the entire thing is better scrubbed than the way Linux distros are thrown together

Haven't you heard about the recent controversy over the WireGuard driver that was merged into the FreeBSD kernel on behalf of pfSense? It was of awful quality and was written by a pretty shady person. Only a few days before the new FreeBSD release, WireGuard's author raised the alarm about it, and the code was pulled before it could ship with the kernel.

https://arstechnica.com/gadgets/2021/03/in-kernel-wireguard-is-on-its-way-to-freebsd-and-the-pfsense-router/

I can't find the long write-up I read about it, but this article explains the matter well enough.

I would say that broader adoption, and even some degree of fragmentation, do more to make an open-source OS robust.

-

OK, reporting back: it turned out to be a wild goose chase, all because at time index 3:10 of the Gateway IT Tutorials video "FreeBSD's Bhyve Overview: Why it's better than other hypervisors. At least for our use-case." (16:46), the presenter appeared to demonstrate that he could clone a VM using Bhyve in about 1 second on a 10-year-old laptop, whereas ProxMox was taking him around 2 minutes to clone a VM. That's the claim that sent me off after this red herring.

Well, after digging into it, I've come to the conclusion that he wasn't truly cloning a VM but rather a containerized VM. That option is also available in ProxMox.

Either way, I'm hoping that once my Beast computer (above) is set up, ProxMox will be able to do rapid clones of either type. That's why I'm pushing toward high-end specs, especially ultra-high NVMe read and write speeds over PCIe 4.0 using some kind of RAID configuration. If I can clone a VM in about a second, then for my purposes usability improves tremendously.

Also, it turns out that TrueNAS "jails" are apparently just the FreeBSD equivalent of Linux/Docker containers, so having realized that, I'm skeptical whether they offer any actual security improvement over what ProxMox or Linux offers.

-

@NeverDie May I ask why you need such speeds? How frequently are you going to clone VMs, and how many of them are you going to have? Is this a home setup?

-

@monte said in Best PC platform for running Esxi/Docker at home?:

@NeverDie May I ask why you need such speeds? How frequently are you going to clone VMs, and how many of them are you going to have? Is this a home setup?

I'm merely interested in trying out something similar to Qubes OS, perhaps approximated using ProxMox. On dated hardware, I found Qubes OS just too slow at spawning/cloning new VMs to be practical. I figure a one-second delay for new spawns would be tolerable. I'm not sure exactly where the cut-off for tolerability is, but one second probably wouldn't be too bothersome.

Also, to sharpen the language of my earlier post: the TL;DR is that I'm now fairly confident the guy in the video was creating "linked clones" in Bhyve rather than "full clones." That's fine, but it wasn't clear in the video, which seems to have confusingly compared Bhyve linked-clone spawn times to ProxMox full-clone spawn times, which of course made Bhyve appear far better than it actually is.

I wasn't fully aware of "linked clones" before, and they may be a good way to go, especially if you have a kind of "golden image" VM from which to spawn linked clones afterward. ProxMox can do linked clones too, not just Bhyve. I'm not entirely sure what the security ramifications of linked clones are compared with full clones. I suppose full clones would be the better of the two, but linked clones might (?) be an acceptable trade-off in exchange for greatly reduced spawn times. Either way, admittedly, it may be overkill.
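The speed difference comes from copy-on-write. A linked clone is just a new, empty layer that points at the base image: reads of untouched blocks fall through to the base, and writes land only in the clone's own layer. A full clone has to read and write every block into an independent disk. The toy model below illustrates the idea only; it is not how ProxMox, ZFS or Bhyve store data internally, and `Disk`, `linked_clone` and `full_clone` are hypothetical names:

```python
from typing import Dict, Optional

ZERO = b"\x00"  # what a never-written block reads as in this toy model


class Disk:
    """Toy block device: block number -> bytes, optionally layered on a
    read-only parent. Blocks not written in this layer fall through to it."""

    def __init__(self, parent: Optional["Disk"] = None) -> None:
        self.parent = parent
        self.blocks: Dict[int, bytes] = {}

    def read(self, n: int) -> bytes:
        if n in self.blocks:
            return self.blocks[n]
        return self.parent.read(n) if self.parent is not None else ZERO

    def write(self, n: int, data: bytes) -> None:
        # Copy-on-write: the change lands in this layer; the parent is untouched.
        self.blocks[n] = data


def linked_clone(base: Disk) -> Disk:
    # O(1): nothing is copied, the new disk just points at the base image.
    return Disk(parent=base)


def full_clone(src: Disk, size: int) -> Disk:
    # O(size): every block is read and copied into an independent disk.
    clone = Disk()
    for n in range(size):
        clone.blocks[n] = src.read(n)
    return clone


if __name__ == "__main__":
    golden = Disk()
    golden.write(0, b"os")
    vm = linked_clone(golden)       # instant, regardless of image size
    vm.write(1, b"browser cache")   # stays in the clone's own layer
    print(vm.read(0), vm.read(1), golden.read(1))
```

The model also hints at the trade-off: a linked clone depends on the base image staying unchanged and available, while a full clone stands entirely on its own.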

-

Closing the loop: https://looking-glass.io/ looks cool for tying it all together without sacrificing latency in user interaction. With PCIe 5.0 deploying in the second half of this year, you'll get NVMe drives with even higher throughput. We're at one of those magic points in time where a lot of technology trends are coming together and reinforcing/leveraging each other. It's all pretty awesome.

-

Reporting back: having been careful to set up everything correctly with the most current ProxMox on new hardware (a Ryzen 5700G with ZFS on root), it takes less than 0.1 second to create a linked clone of a virtual machine, as reported by ProxMox's task log. The size of the VM doesn't seem to matter. The same goes for snapshots.

So, at least for me, that settles the debate. Due to greater familiarity, and a more complete GUI than Bhyve on TrueNAS, I've decided to stick with ProxMox.

As to why creating full-clone VMs takes so much longer than it seems like it should, I did manage to learn something new: on this fresh hardware, which uses NVMe for storage, creating a full clone of a VM turns out to be very much CPU-bound rather than storage-bound. Using htop for insight, I found that creating a full clone pushes all 8 of the Ryzen cores nearly to maximum, even with automatic ZFS compression and encryption both turned off. Running some tests, I found that the time taken to create a full clone depends on both the virtual disk size and how "full" that disk image is with the files inside it. So, for whatever reason, creating a full clone apparently involves more than a straight bit-for-bit copy of the VM's virtual disk image, and apparently that's why it takes so long.

Given this, I'm doubtful there's a workaround that would speed up the creation of full clones, but if anyone knows of one, or of a different approach, please do post a comment. The one thing that probably would help is a CPU with more cores, like a Ryzen 9 5950X or a Threadripper, since the CPU is the chokepoint and htop suggests that even more cores could be used in parallel to speed up the full-clone process. Perhaps the good news is that there's no need to build a super-fast 20 GB/s+ NVMe storage array, as faster storage alone isn't going to reduce the time it takes to create a full clone.
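One plausible explanation for "depends on both size and fullness" is a zero-aware (sparse) copy: every block of the source must be read and scanned, which scales with disk size, but only non-zero blocks are written, which scales with how full the disk is. I have not checked which tool ProxMox uses internally for full clones, so treat this as an illustration of the general technique, not of ProxMox's actual code path. The sketch is single-threaded and uses an assumed 64 KiB block size:

```python
import os
from typing import BinaryIO

BLOCK = 64 * 1024  # ASSUMED block size for this sketch


def sparse_copy(src: BinaryIO, dst: BinaryIO) -> int:
    """Copy src to dst, seeking over all-zero blocks instead of writing them,
    so dst stays sparse. Every block is still read and compared (cost grows
    with disk size); only non-zero blocks are written (cost grows with how
    full the disk is). Returns the number of bytes actually written."""
    zero = bytes(BLOCK)
    written = 0
    while True:
        chunk = src.read(BLOCK)
        if not chunk:
            break
        if chunk == zero[: len(chunk)]:
            dst.seek(len(chunk), os.SEEK_CUR)  # leave a hole instead of writing zeros
        else:
            dst.write(chunk)
            written += len(chunk)
    dst.truncate()  # set the final length in case the file ends in a hole
    return written
```

Usage: open the source with `open(path, "rb")` and the destination with `open(path, "wb")`, then call `sparse_copy(src, dst)`. Real tools typically also spread this scanning across several threads, which would be consistent with seeing all cores busy.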

Meanwhile I'll change my workflow to using more VM snapshots and fewer VM full-clones.

Lastly, being able to create a linked clone in < 0.1 second means it should be quite convenient to use "throwaway" linked-clone VMs for internet browsing, for greater security against malware incursion. This is much faster than Qubes OS was able to spawn its disposable browser VMs. A 1-second full clone of a VM might yet be possible using a very small, special-purpose browser distro. Judging from my recent measurements, a browser distro about one tenth the size of the Linux Lite distro, perhaps together with a more powerful CPU, might be sufficient. BrowserLinux is just 96 MB in size, so it might conceivably reach that objective even without a faster CPU. Unfortunately, it was last updated in 2014, so I'm guessing it's probably full of security holes...

-

@NeverDie Make sure you enable the SSD option on the virtual disk in ProxMox, and that the partitions are mounted with the discard option. That makes backups much smaller and faster (because deleted data doesn't need to be copied), so it will probably affect full cloning as well. You can also use the command line tool fstrim to manually discard unused data.

-

@mfalkvidd said in Best PC platform for running Esxi/Docker at home?:

@NeverDie ...You can also use the command line tool fstrim to manually discard unused data.

Looking into this, I think checking the "Discard" box on the "Hard Disk" tab of the "Create a Virtual Machine" dialog in ProxMox may automatically accomplish the same thing. Also, the ProxMox dataset that the VM is saved on needs to be set to thin provisioning. So, counting those two, the SSD emulation you mentioned, and automatic compression such as lz4, four things in total need to be set correctly for it to work optimally.

Originally I was concerned because, with ZFS as the file system, ProxMox only allows storing a VM disk as a pre-allocated "raw" image (on ZFS, actually a zvol) rather than as a qcow2 file, as it would on a Linux ext4 file system. I worried that the image would take up enormous space even if the VM's virtual disk were mostly empty. So I did the experiment, and it turns out that this is true if the ProxMox dataset isn't configured for thin provisioning or if automatic compression isn't turned on, but fortunately the true size of the raw image does indeed shrink if both of those are enabled.
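Why compression matters so much here: a freshly allocated virtual disk is mostly long runs of zeros, and any general-purpose compressor shrinks those to almost nothing, while real file data compresses far less. The sketch uses Python's zlib as a stand-in for lz4 (a different algorithm, chosen only because it ships with Python) and random bytes as an assumed worst case for "file" data:

```python
import os
import zlib

MiB = 1024 * 1024


def compressed_size(image: bytes, level: int = 1) -> int:
    """Bytes needed to store image after zlib compression (stand-in for lz4)."""
    return len(zlib.compress(image, level))


def demo(disk_mib: int = 16, used_mib: int = 1) -> None:
    empty = bytes(disk_mib * MiB)  # freshly created virtual disk: all zeros
    # Same-size disk where used_mib of it holds incompressible "file" data.
    used = os.urandom(used_mib * MiB) + bytes((disk_mib - used_mib) * MiB)
    for name, image in (("empty", empty), ("partly used", used)):
        print(f"{name}: {len(image) // MiB} MiB raw -> "
              f"{compressed_size(image) / MiB:.3f} MiB compressed")


if __name__ == "__main__":
    demo()
```

The stored size ends up tracking the data actually written rather than the nominal disk size, which matches the behavior described above.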

So, having proved that to myself, I'll pass on the tip: I now create a VM's disk as large as I can imagine it would ever need to grow, and then let thin provisioning keep the true size of the virtual disk only as large as actually necessary. This way I don't have to worry that I created too small a VM disk, or that the VM will later outgrow the disk size originally allocated for it.
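The same idea can be seen on an ordinary Unix file system with a sparse file: it reports a large apparent size while almost no space is actually allocated until data is written. This is only an analogy for a thin-provisioned zvol, not ProxMox's mechanism; it assumes a Unix-like OS (for `st_blocks`) and a file system that supports sparse files, and the helper names are hypothetical:

```python
import os
from typing import Tuple


def create_thin_image(path: str, size_bytes: int) -> None:
    """Create a file with the given apparent size without writing any data;
    on file systems with sparse-file support, no data blocks are allocated."""
    with open(path, "wb") as f:
        f.truncate(size_bytes)


def apparent_and_allocated(path: str) -> Tuple[int, int]:
    st = os.stat(path)
    # st_size: size the file claims to be; st_blocks: 512-byte units allocated (Unix only).
    return st.st_size, st.st_blocks * 512


if __name__ == "__main__":
    import tempfile
    with tempfile.TemporaryDirectory() as d:
        path = os.path.join(d, "vm-disk.img")
        create_thin_image(path, 1024 ** 3)  # a "1 GiB" virtual disk
        print(apparent_and_allocated(path))
```

Oversizing the disk this way costs little up front; what grows is the data actually written, which is why discard/TRIM still matters for reclaiming space after files are deleted inside the guest.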