RFM69 sensitivity vs packet loss

-

I want to share some measurements regarding RFM69 settings.

First the setup:

RFM69W, 868 MHz, on battery powered custom PCB node

Sensitivity -102.5 dBm / BR 19.2 kbps / RX BW 41.7 kHz, measurement time window ~90 s.

30 messages were sent during this time window.

Now the number of different interrupts during this time window:

28600 RX READY => RX READY every 3 ms on avg

28571 Sync Addr Timeouts

17 SYNC ADDR

This shows that at least on this node there is a huge number of false RX Ready interrupts. (RX Ready happens after the preamble has been received, including Automatic Gain Control.) So the node thinks that it is receiving a preamble all the time (although this is only noise).
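As a quick sanity check of the figures above, here is a minimal Python sketch that derives the average RxReady spacing and the share of RxReady events that never reached a sync-address match. The function names are made up for illustration; only the counts and the ~90 s window come from the post.

```python
# Interrupt counts quoted above, measured over a ~90 s window.
WINDOW_S = 90.0
RX_READY = 28600
SYNC_ADDR = 17


def mean_interval_ms(count: int, window_s: float) -> float:
    """Average spacing between events, in milliseconds."""
    return window_s / count * 1000.0


def false_trigger_share(rx_ready: int, sync_addr: int) -> float:
    """Fraction of RxReady events that did not lead to a sync-address match."""
    return 1.0 - sync_addr / rx_ready


interval = mean_interval_ms(RX_READY, WINDOW_S)
share = false_trigger_share(RX_READY, SYNC_ADDR)
print(f"RxReady every {interval:.2f} ms on average")
print(f"{share:.2%} of RxReady events never reached a sync match")
```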

One point to note: In all RFM69 libraries that I know of, the receive function only waits for the "Payload Ready" IRQ. This actually means that all RFM69 libraries are broken by design.

Why? Because if you have an imperfect sensitivity setting and only wait for Payload Ready IRQs, the preamble you receive will be wrong (it won't be the one from your sender). If you now use AFC or AGC (AGC is on by default), they will be wrong, or only correct by chance, because they rely on a preamble from the right sender. It's then left up to luck whether AGC has adjusted to the right value.

The reason why this broken design does not show up prominently is that

a) you're living in an environment where radio hasn't been invented yet - or there are just no other senders that are triggering your receiver erroneously

b) you're using ACKs and thereby "hiding" the actual broken design (by retrying something that should have been received in the first place)

Coming back to the figures:

If I reduce sensitivity to -100 dBm, the number of RX READY interrupts within ~60 seconds goes down to ~800 (from almost 20k).

Let's have a look at some other setup.

Location: Vienna, close to center.

Acks: Not used.

Receiver: Orange Pi Zero with custom Gateway Hat with noise filtering (using mains power) with RFM69HW

Sender: Custom battery powered nodes with RFM69W

Same radio settings as above, but sensitivity: -110 dBm

I have measured packet loss from 5 sender devices over a time window of ~16.5 hours.

| Device | Received | Missed | Packet Loss % |
|---|---|---|---|
| A | 2000 | 28 | 1.38% |
| B | 1978 | 27 | 1.35% |
| C | 2002 | 45 | 2.20% |
| D | 1970 | 26 | 1.30% |
| E | 2000 | 25 | 1.23% |

A 2nd test showed similar results ranging from 0.91% to 1.74%.
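The loss percentages in the table above are consistent with missed / (received + missed). A small Python sketch, with a made-up helper name, that recomputes them from the raw counts:

```python
# (received, missed) per sender device, copied from the table above.
RESULTS = {
    "A": (2000, 28),
    "B": (1978, 27),
    "C": (2002, 45),
    "D": (1970, 26),
    "E": (2000, 25),
}


def packet_loss_pct(received: int, missed: int) -> float:
    """Packet loss as a percentage of all packets that were sent."""
    return 100.0 * missed / (received + missed)


for device, (received, missed) in RESULTS.items():
    print(f"{device}: {packet_loss_pct(received, missed):.2f}%")

# Loss over all five devices combined.
total_received = sum(r for r, _ in RESULTS.values())
total_missed = sum(m for _, m in RESULTS.values())
print(f"all: {packet_loss_pct(total_received, total_missed):.2f}%")
```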

I repeated the same test, this time, with a battery powered node as receiver - just to make sure it has nothing to do with noise on the power line.

The results were even slightly worse: ranging from 1.3% to 2.41% packet loss.

Using the gateway as receiver again, I changed radio settings (BR 76.8 kbps, BW 125 kHz, whitening, changing sync word etc.) but the figures were still not better.

So what happened when reducing sensitivity to -90 dBm? Still no substantial improvement.

The reason is that RFM69 libraries are broken by design in a second way. It has to do with the way the transceiver works when listening for packets: it goes through multiple stages.

The problem is: If you only wait for "Payload Ready", the receiver can be caught in the wrong stage just at the very moment when your sender is transmitting the message. In this case the receiver will miss the packet, because it was waiting for the end of the packet of the "wrong" sender.

This is the reason for the packet loss.

After fixing this error by introducing timeouts, I have achieved the following figure:

| Received | Missed | Packet Loss % |
|---|---|---|
| 2745 | 7 | 0.25% |

Same environment, same receiver, one of the same senders, same message frequency.

Before, I had missed packets every hour. At maximum I would have 1 hour without missed packets. Now 5-6 hours can pass without a single missed packet. All figures are without ACKs/retransmissions.

-

@canique interesting info, but what is the actual point you're trying to make?

You say all rfm libraries are broken by design; do you have a suggestion to fix them?

You added timeouts; if you show people where, it becomes reproducible and people may benefit or even be able to improve on it.

-

There are 2 points I'm trying to make:

- the sensitivity setting (register 0x29) is very important. Setting the threshold too low (i.e. making the receiver too sensitive) makes AGC (and AFC, but it's rarely used) completely useless. One could even think of setting the gain manually instead of relying on AGC.

- The RFM69 chip is not smart enough to restart the RX procedure at the right point in time. It has some interrupts that tell you at which stage of the RX procedure it is currently. If you don't use these interrupts but follow the approach "I'll just wait for a Payload Ready IRQ" you will face packet loss.

There are multiple ways I can think of to fix this:

a) use the internal timeout setting (register 0x2B) of the RFM69 chip to set a timeout for the payload ready interrupt + listen to the RFM69 timeout interrupt (DIO0, DIO3 or DIO4 can be used) in your microcontroller. As soon as the timeout interrupt fires, the RX loop has to be restarted via register 0x3D (RegPacketConfig2).

b) same as above but more sophisticated: Instead of using the RFM69 internal timeout a timer of the MCU can be used for the task and more fine grained control is achievable via these interrupts (in the given order):

RxReady, SyncAddress, FifoLevel (you'd have to set FifoThreshold to some sane value like 3 bytes first), PayloadReady.

According to the bit time (called Tbit in the RFM69 documentation), every interrupt must occur within a certain time frame. E.g. if your SyncAddress is 2 bytes long (standard), the timeout must be at least 2 x 8 x Tbit. That's the time needed to receive the SyncAddress after RxReady has fired. But it's a bit more complicated than that, because afaik RxReady can fire while still receiving the preamble (e.g. 5 byte preamble, but RxReady firing after 2 bytes). This depends on whether AFC/AGC are used or not and is explained in the documentation.

If a timeout is detected at any stage -> RxRestart like in a).

Additionally [valid for a) + b)], the sensitivity has to be tuned in such a way that there are not thousands and thousands of false preambles within a short time. Or to put it in other words: the RSSI threshold must be above the noise level.

The advantage of b) over a) is that the RX loop can be aborted at an earlier stage without having to wait the full "timeout" time.
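To make option b) a bit more concrete, here is a rough Python model of the per-stage timeout logic. It is only a sketch of the idea, not code from any RFM69 library: `FrameLayout`, `stage_budgets_us`, `RxWatchdog`, the frame sizes and the 1.5x margin are all assumptions made up for illustration, and the "+1" on the FIFO threshold reflects my understanding that FifoLevel fires once the FIFO holds more bytes than the threshold. On real hardware the `must_restart` check would run from an MCU timer, and a positive result would be followed by an RX restart via RegPacketConfig2 (0x3D).

```python
from dataclasses import dataclass

# Interrupt order described above while a packet is being received.
STAGES = ("RxReady", "SyncAddress", "FifoLevel", "PayloadReady")


def tbit_us(bitrate_bps: float) -> float:
    """Duration of one bit (Tbit) in microseconds."""
    return 1_000_000.0 / bitrate_bps


@dataclass(frozen=True)
class FrameLayout:
    # All values are illustrative assumptions, not library defaults.
    preamble_slack_bytes: int = 3   # preamble that may still follow RxReady
    sync_bytes: int = 2
    fifo_threshold_bytes: int = 3
    max_payload_bytes: int = 64


def stage_budgets_us(bitrate_bps, layout, margin=1.5):
    """Longest allowed wait (in us) for each stage after the previous one."""
    t = tbit_us(bitrate_bps) * margin
    return {
        "SyncAddress": (layout.preamble_slack_bytes + layout.sync_bytes) * 8 * t,
        "FifoLevel": (layout.fifo_threshold_bytes + 1) * 8 * t,
        "PayloadReady": layout.max_payload_bytes * 8 * t,
    }


class RxWatchdog:
    """Tracks the deadline by which the next RX interrupt has to arrive."""

    def __init__(self, budgets_us):
        self.budgets_us = budgets_us
        self.deadline_us = None  # None: nothing pending

    def on_irq(self, name, now_us):
        """Call from the interrupt handler; arms the deadline for the next stage."""
        idx = STAGES.index(name)
        if idx + 1 < len(STAGES):
            self.deadline_us = now_us + self.budgets_us[STAGES[idx + 1]]
        else:
            self.deadline_us = None  # PayloadReady: packet is complete

    def must_restart(self, now_us):
        """True once the expected next interrupt is overdue."""
        return self.deadline_us is not None and now_us > self.deadline_us

    def restarted(self):
        """Call after the RX loop has been restarted on the radio."""
        self.deadline_us = None


if __name__ == "__main__":
    budgets = stage_budgets_us(19200, FrameLayout())
    for stage, budget in budgets.items():
        print(f"{stage}: within {budget:.0f} us of the previous interrupt")
```

The point of splitting the budget per stage is the one made above: a false RxReady can be abandoned after a few byte times instead of after a full-packet timeout.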

-

@canique I don't have much experience with rfm, but i definitely would prefer a solution that doesn't require a timer on the cpu side (so option a). Especially on the popular atmega 328 timers are scarce.

Could you prepare a pull request with your suggestions, so people can try it out?

-

@Yveaux alas I can't due to lack of time. But maybe somebody has spare time to tackle this.

Just to give a few numbers...

Condition: Bitrate 76800 bps / RX BW 125000 Hz

I could measure the following numbers:

| Sensitivity | Number of RX Ready per minute |
|---|---|
| -101 dBm | 4500 |
| -100.5 dBm | 1300 |
| -100 dBm | 1100 |
| -99 dBm | 800 |

If set to -99 dBm, packet loss is small.

But this evening, the number of RX Ready events went up significantly. Packet loss went up too.

Reason: I switched on my television. Distance to gateway ~30 cm.

So a TV in the vicinity of the gateway has a tremendous effect on sensitivity.

I ran a similar test some days ago with a sensitivity of -90 dBm and did not notice any packet loss then.
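One way to turn a table like the one above into a setting is to pick the most sensitive threshold whose false RxReady rate stays under some budget. The sketch below does just that; the budget of 1200 per minute is an arbitrary assumption for illustration, and since the TV episode shows the noise floor moves, such a measurement would have to be repeated under worst-case conditions.

```python
# Measured at 76800 bps / RX BW 125 kHz: threshold in dBm -> RxReady per minute.
MEASURED = {
    -101.0: 4500,
    -100.5: 1300,
    -100.0: 1100,
    -99.0: 800,
}


def pick_threshold(measured, max_rx_ready_per_min):
    """Most sensitive (lowest dBm) threshold that stays within the budget.

    Returns None if no measured setting meets the budget.
    """
    acceptable = [dbm for dbm, rate in measured.items()
                  if rate <= max_rx_ready_per_min]
    return min(acceptable) if acceptable else None


print(pick_threshold(MEASURED, max_rx_ready_per_min=1200))
```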

-

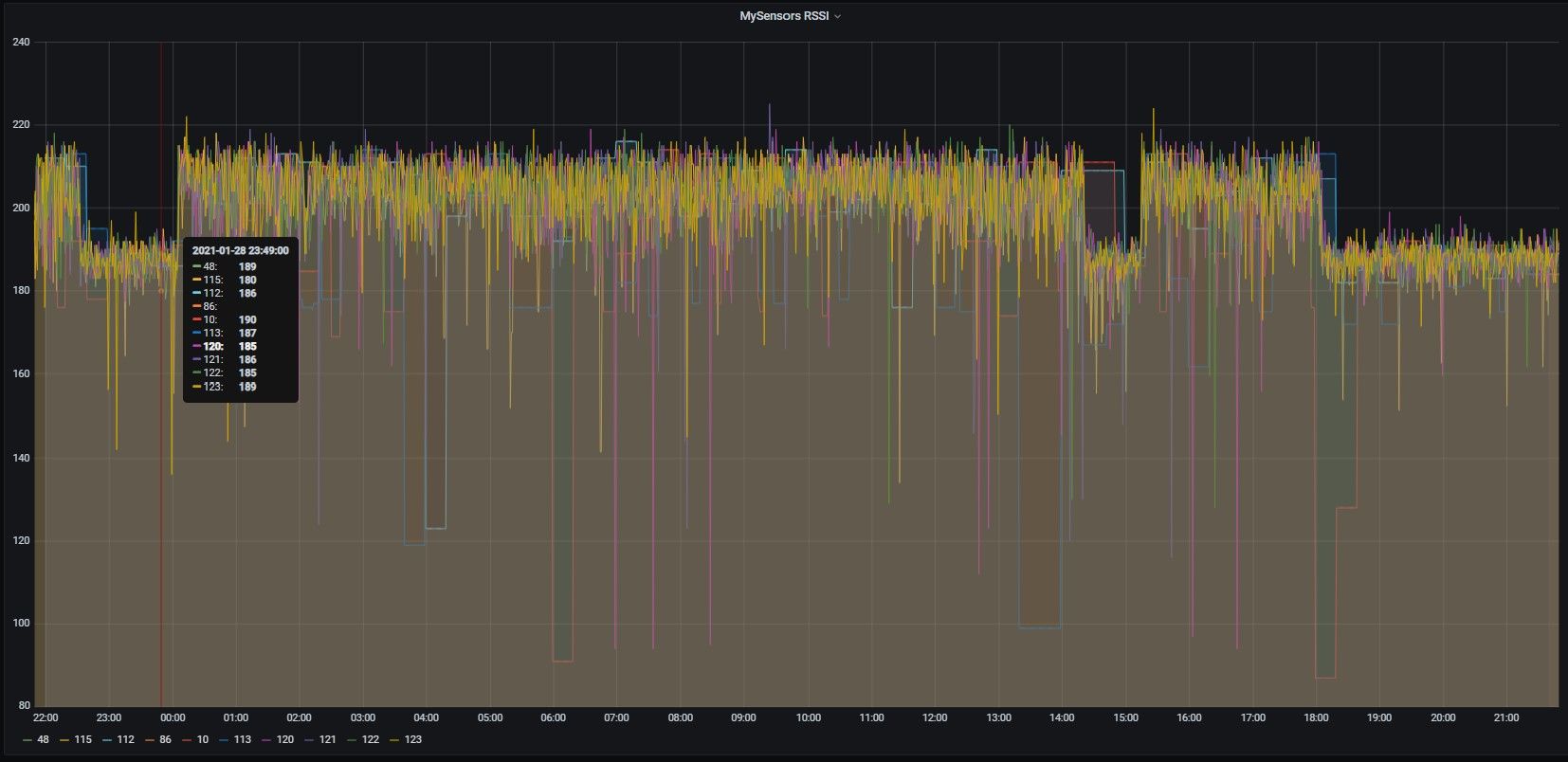

I also have the gateway near the TV (behind it) with the antenna 30-40 cm below it.

The RSSI value drops for all nodes, and this drop can successfully be used to detect that the TV is on :D. With the magnetic antenna on the TV's support, communication stops working even for nodes 4 m away in the same room.

-

I have gathered data in a LibreOffice table...

Sensitivity vs RX Ready at different bit rates

The gist of it is:

@19.2 kbps I was able to achieve -105 dBm with low noise (low number of false RX Ready interrupts)

@25 kbps -104 dBm

@4.8 kbps -110 dBm

@40 kbps -99 dBm

@76.8 kbps -99 dBm

@alexelite I guess you'd have to reduce the sensitivity further than -90 dBm, maybe to -85 dBm, for things to work while the TV is on.

-

How is it that you're increasing your receive sensitivity? RxReady just means that the RSSI Threshold has been met and that the SX1231 can start listening for a preamble. See figures on page 39 of the SX1231 datasheet: https://semtech.my.salesforce.com/sfc/p/#E0000000JelG/a/44000000MDkO/lWPNMeJClEs8Zvyu7AlDlKSyZqhYdVpQzFLVfUp.EXs It doesn't mean that a matching preamble has been found, nor does it necessarily mean that a matching preamble will be found in the time allowed. So, naturally, if you reduce the RSSI threshold, you can get more Rx Ready events just from noise in the environment.

I don't see what the problem is. Unless you're trying to indirectly measure the noise level, your program should pay attention to packetready events, not rxready events. In packet mode, there's a fixed window between rxready and timing out in which to receive a valid packet, so a stream of rxready events could just mean a stream of timed-out receive windows triggered by ambient noise, not a series of received preambles. In contrast, PacketReady is triggered only on frames that pass all the filtering that's used to determine whether a genuine packet has been received. There's always a chance for false positives, but the number of occurrences can be reduced to practically never depending upon how much you're willing to use longer packet addresses, data whitening, longer data payloads with CRC turned-on, etc.

Hope that helps. Good luck!

-

@NeverDie said in RFM69 sensitivity vs packet loss:

How is it that you're increasing your receive sensitivity?

When I say "sensitivity setting" I mean the RSSI threshold setting in register 0x29 as posted above.
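For reference, a minimal sketch of how a threshold in dBm maps to the value written to register 0x29. It assumes the datasheet encoding of threshold = -RssiThreshold / 2 dBm (0.5 dB steps), which is worth double-checking against your datasheet revision; the helper names are made up.

```python
REG_RSSI_THRESH = 0x29  # register address mentioned above


def rssi_thresh_reg(dbm: float) -> int:
    """Convert a threshold in dBm to the register value (0.5 dB steps)."""
    value = round(-2 * dbm)
    if not 0 <= value <= 255:
        raise ValueError(f"{dbm} dBm does not fit into one register byte")
    return value


def rssi_thresh_dbm(value: int) -> float:
    """Convert a register value back to a threshold in dBm."""
    return -value / 2


for dbm in (-110, -102.5, -100, -90):
    print(f"{dbm} dBm -> 0x{rssi_thresh_reg(dbm):02X}")
```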

@NeverDie said in RFM69 sensitivity vs packet loss:

RxReady just means that the RSSI Threshold has been met and that the SX1231 can start listening for a preamble. See figures on page 39 of the SX1231 datasheet

The bold part of your statement is plain wrong. RxReady means that enough preamble (or any other data like noise) has been received to read RSSI and optionally tune AGC/AFC. [This is why -if you set the wrong RSSI Threshold- you permanently get RxReady interrupts.]

The preamble is there to help adjust the AGC/AFC. That's the purpose of the preamble. So your preamble must be sent before the RxReady interrupt, not after it like you claim. Of course you can send the preamble after the RxReady interrupt (like you can pour soup over your salad) but then you have a potentially wrong AGC/AFC setting. It's just not meant to be.

@NeverDie said in RFM69 sensitivity vs packet loss:

It doesn't mean that a matching preamble has been found, nor does it necessarily mean that a matching preamble will be found in the time allowed.

This is true. RxReady will also fire on noise. The formulas given on the page you refer to more often than not suggest ~14 bits of data for RxReady to fire (with AGC enabled, AFC disabled). This data can stem from noise. This is why it is important to keep the RSSI threshold above the noise floor. I'm not sure whether you got me right regarding my goal: I'm not trying to improve sensitivity. I'm trying to reduce packet loss to a minimum.

@NeverDie said in RFM69 sensitivity vs packet loss:

In packet mode, there's a fixed window between rxready and timing out in which to receive a valid packet, so a stream of rxready events could just mean a stream of timed-out receive windows triggered by ambient noise, not a series of received preambles.

Are you referring to RegRxTimeout2 (register 0x2B)? By default it is not set.
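If one wanted to enable that timeout, the value could be derived from the longest expected frame. The sketch below assumes, per my reading of the datasheet, that the timeout fires TimeoutRssiThresh x 16 x Tbit after the RSSI interrupt if PayloadReady has not occurred, and that 0 disables it. The helper names, the 28-byte frame and the 25% margin are made up for illustration.

```python
import math

REG_RX_TIMEOUT2 = 0x2B  # register address mentioned above


def timeout_rssi_thresh(frame_bytes: int, margin: float = 1.25) -> int:
    """Register value covering a whole frame, in units of 16 bit times."""
    bits = frame_bytes * 8 * margin
    value = max(1, math.ceil(bits / 16))  # 0 would disable the timeout
    if value > 255:
        raise ValueError("frame too long for a one-byte timeout register")
    return value


def timeout_ms(value: int, bitrate_bps: float) -> float:
    """Resulting timeout in milliseconds at the given bitrate."""
    return value * 16 / bitrate_bps * 1000.0


# Hypothetical frame: 3 preamble + 2 sync + 1 length + 20 payload + 2 CRC bytes.
reg = timeout_rssi_thresh(28)
print(f"0x{REG_RX_TIMEOUT2:02X} <- 0x{reg:02X} ({timeout_ms(reg, 19200):.1f} ms at 19.2 kbps)")
```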

And iirc I only get this series of RxReady events if I restart the RX loop. Otherwise RxReady will stay set.

-

@canique said in RFM69 sensitivity vs packet loss:

The bold part of your statement is plain wrong. RxReady means that enough preamble (or any other data like noise) has been received to read RSSI and optionally tune AGC/AFC. [This is why -if you set the wrong RSSI Threshold- you permanently get RxReady interrupts.]

The preamble is there to help adjust the AGC/AFC. That's the purpose of the preamble. So your preamble must be sent before the RxReady interrupt, not after it like you claim. Of course you can send the preamble after the RxReady interrupt (like you can pour soup over your salad) but then you have a potentially wrong AGC/AFC setting. It's just not meant to be.

Yes, good clarification. I didn't say it right. The desired scenario would be where the preamble signal strength is much higher than the background noise and the RSSI threshold is set high enough that only the preamble triggers the RxReady, not the background noise.

By the way, why are there two RSSI sampling phases back-to-back (each of length Trssi)? What role does the second RSSI sampling serve?